LawGeex, a developer of AI software to review contracts, today released a study that found that AI beats lawyers in the accuracy of reviewing NDAs (non-disclosure agreements).

The LawGeex infographic is at https://www.lawgeex.com/AIvsLawyer/ and you can also download the 37-page PDF study from that page. It’s worth reading. Richard Tromans has a summary and some comments in his post today, “LawGeex Hits 94% Accuracy in NDA Review vs 85% for Human Lawyers.”

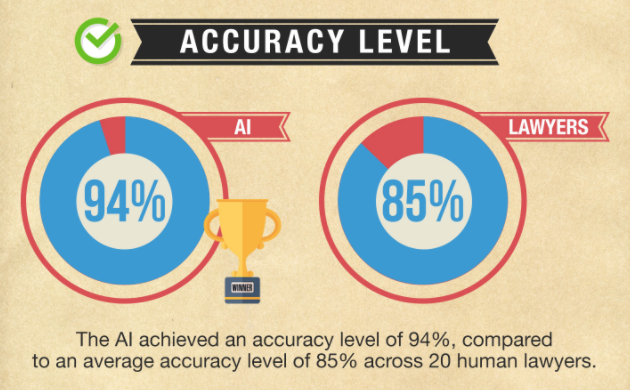

Since LawGeex and Richard both summarize the study, I will cite here only the key finding: that the AI achieved 94% accuracy versus 85% for a group of 20 experienced lawyers. The percentage is the F-measure, which combines recall and precision. This is the standard statistical measure for this type of comparison. Anyone who has followed the discussion of predictive coding in document review in eDiscovery will recognize that metric.
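For readers who have not worked with the metric, here is a minimal sketch of how an F-measure combines precision and recall. It assumes the common balanced form (F1, the harmonic mean of the two); I have not confirmed which weighting the study used. The function name and the reviewer counts are hypothetical, chosen only to illustrate the arithmetic. They are not figures from the LawGeex report.

```python
def f_measure(true_pos: int, false_pos: int, false_neg: int, beta: float = 1.0) -> float:
    """Combine precision and recall into a single F-measure score.

    true_pos:  issues the reviewer flagged that really are issues
    false_pos: issues the reviewer flagged that are not issues
    false_neg: real issues the reviewer missed
    beta:      weight of recall relative to precision (1.0 = balanced F1)
    """
    if true_pos == 0:
        # No correct findings means precision and recall are both zero.
        return 0.0
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Hypothetical counts, for illustration only (not data from the study).
# A cautious reviewer flags only sure things: perfect precision, weak recall.
cautious = f_measure(true_pos=60, false_pos=0, false_neg=40)
# A thorough reviewer finds more issues but raises a few false alarms.
thorough = f_measure(true_pos=90, false_pos=10, false_neg=10)

print(f"cautious reviewer F1: {cautious:.2f}")
print(f"thorough reviewer F1: {thorough:.2f}")
```

Because the harmonic mean is pulled toward the lower of the two inputs, a reviewer cannot score well by being strong on precision alone or recall alone. That is why a single F-measure is a reasonable basis for comparing a machine with human reviewers.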

The study is just out and I am commenting without leaving time to let it sit. Plus, I suspect others will comment on it as well. So my views may change but here are my initial thoughts:

- That Machines Beat Lawyers Is Not Surprising. Many lawyers may be surprised that the AI won; I am not. I started comparing human versus machine performance in discovery document review in the early 1990s. Even then, with an earlier generation of natural language processing operating against text generated by OCR, it was clear that the machines often performed better. A decade later, the predictive coding discussion in eDiscovery document review began in earnest – and continues. Many studies have found predictive coding is more accurate than lawyers.

- Courts Not a Barrier to Adoption Here. Predictive coding uptake has been slowed by the need for judicial review and acceptance, a process that takes years. In contrast, for NDA reviews, in-house counsel get to decide on their own what approach to use. They don’t have to persuade a court. (I am sure some will say, “well, what if something was missed and it leads to litigation?” My answer: so what? Whatever litigation has arisen over NDAs to date is the result of human action – or perhaps failure to act.)

- A Move to Evidence-Based Decisions re How Lawyers Practice. The way lawyers practice is based on history and precedent. Rarely do we have evidence to support one practice approach versus another. Though some may find flaws in the study, I applaud it for providing what appears to be well-grounded evidence. The predictive coding acceptance battles teach us that controversy over study design may arise. Good. That’s how science and empirical testing are supposed to work. If you do not think one study is properly conducted, do your own. Or point out the flaws and hope someone else fixes them.

- Inter-Reviewer Variability and a Gold Standard in Studying Outcomes. This point is a bit of a detailed note on study design and methods. Lawyers often overlook the fact that expert lawyers often disagree about how to interpret legal materials. That’s a big problem when studying outcomes. Contrast that with medicine, where most clinical trials use objective measures to determine treatment efficacy. Those measures are typically reproducible and consistent. And a clinical trial typically compares a new treatment to the existing “gold standard” (widely accepted though not always evidence-based best practice). With direct comparison of two approaches and objective measures, successful clinical trials usually provide meaningful and actionable results. Law lacks this approach. One reason is that the legal market has little ethos of taking an evidence-based approach to how it practices. Another reason is that the legal gold standard – humans doing the work – is highly variable and far from objective. At minimum, I’d say the LawGeex study worked hard to overcome these two limitations. (I have written about a dozen blog posts that at least touch on the gold standard issue in law.)

- An Unexplored Issue – Opportunity to #DoLessLaw? While I am not surprised that AI beats lawyers, I am disturbed by the result in one specific way: do we really need so much complexity and variability in NDAs? Lawyer accuracy is lower than the machine’s, I believe, because NDAs have so many variations both in what they seek to accomplish and the language they use to do it. Do the results here show that we have constructed too complicated a system? Could individuals and organizations protect their confidential information with simpler, more standardized agreements? Lawyers love their complexity but perhaps an approach that simplifies and standardizes would serve business and society better.
